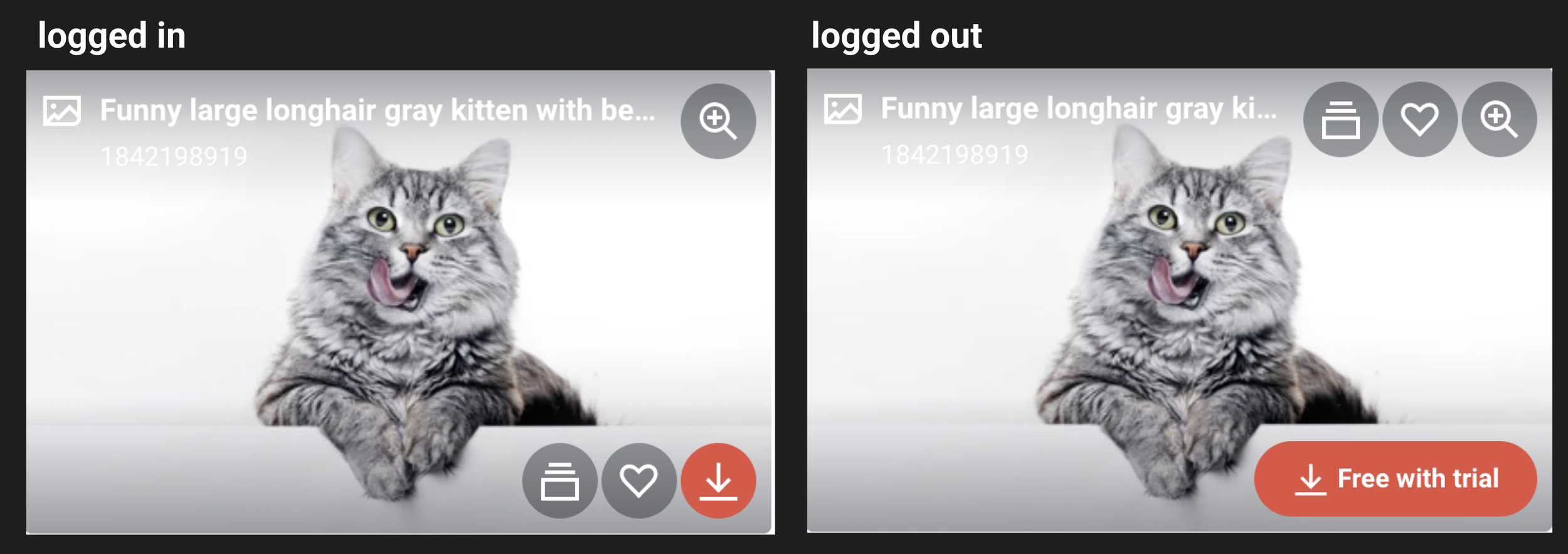

Search results hover state

Context

There are things you learn from years of experience as a user experience designer. One of those things for me is that buttons that have both icons and links, instead of just an icon, result in more user understanding and clicks. I took on the search and discovery team in late 2022 / early 2023. As an onboarding activity I ran an audit and noticed that the rollover state on our search results could be clearer and less cluttered.

Of the 17 million logged-in and 163 million logged-out users who land on the Shutterstock search results page each month, only about 10% download directly from this page. About 2.2% of logged-in users click the similar asset button (less than 1% of logged-out users), and 66% of those users convert. About 6% of logged-in users click the save button (less than 1% of logged-out users), and 66% of those convert as well. So the problem is, “How might we get users to engage with either save or similar more?”
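To make the size of the opportunity concrete, here is a rough back-of-the-envelope sketch of the logged-in funnel using only the figures quoted above. It assumes the percentages are exact and that "convert" means the user eventually downloads; the function name and the use of basis points are illustrative choices, not part of the original analysis.

```python
# Back-of-the-envelope funnel sizes from the figures quoted above.
# Assumption: the quoted percentages are exact and apply to monthly logged-in users.
LOGGED_IN_MONTHLY = 17_000_000


def funnel(users: int, click_rate_bp: int, convert_rate_pct: int) -> tuple[int, int]:
    """Return (clickers, converters) using integer math.

    click_rate_bp is in basis points (2.2% -> 220);
    convert_rate_pct is a whole percent (66% -> 66).
    """
    clickers = users * click_rate_bp // 10_000
    converters = clickers * convert_rate_pct // 100
    return clickers, converters


similar = funnel(LOGGED_IN_MONTHLY, 220, 66)  # 2.2% click similar, 66% convert
save = funnel(LOGGED_IN_MONTHLY, 600, 66)     # 6% click save, 66% convert

print("similar (clickers, converters):", similar)
print("save (clickers, converters):", save)
```

Even at low click rates, each additional point of engagement feeds a step that converts at roughly two in three, which is why these two buttons were worth testing.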

The problem

Product Design Lead - As a lead product designer at Shutterstock, it is part of my job to proactively find high-impact opportunities for our users and influence our product owners to take them on. I think about our users’ needs and our website(s) from a holistic perspective to create a design strategy.

In this project I was responsible for the design testing strategy and the end-to-end product design process.

The team - UX design, Product, SEO, Customer care, and Development.

Core team:

Product Design and Research -

Lead product designer - Bridget Workman

Product Management -

Senior Product manager - Daniel Fernandes

Engineering -

Principal software engineer - David Whelan

My role and the team

We had many goals for this project:

Increase engagement with “save” and “similar” buttons in our search results page rollover state.

Hypothesis:

Belief: We believe that users do not have a clear enough visual cue that they can save an asset or view similar assets within the search results page (SRP). We group the icons with other CTAs, which does not give users a distinct, clear visual cue that they have this ability on the SRP.

Objective: Provide buttons that are distinct (away from other actions) and clear (include verbiage of ‘Save’ and ‘Similar’), so that users understand them more readily and use them more often.

Prediction: By providing a clear, distinct ‘Save’ and ‘Similar’ CTA, we will enable more users to use these features. With an existing high percentage of users downloading after engaging with these, we should see this lead to an increase in license rate.

Expected outcome: Increased % from SRP -> ‘Save’ and ‘Similar’ flow, and a higher search session success rate.

Goals

Data

Here you can see the overall traffic to the search results page, how many of those users click through to an asset detail page, how many click similar, and how many click save. You can also see how many of the users who take any of those actions eventually download an image.

This data is what shows us the opportunity to increase engagement with similar and save in order to increase downloads/conversion. It’s very clear that while the clicks on similar and save are objectively low, the impact is high, so if we can increase that engagement we can increase downloads.

The goal of a Kano analysis is to figure out which features of a given product are table stakes and which are differentiators. While this isn’t a traditional Kano study with a survey or questionnaire, I devised a mixture of competitive analysis and Kano to find what our users may be used to and what makes us or our competitors stand out.

Below you can see what we and our direct competitors have in search result rollover states. Similar images and save are both table stakes across all sites. This tells us that our users expect to have these options clearly available to them.

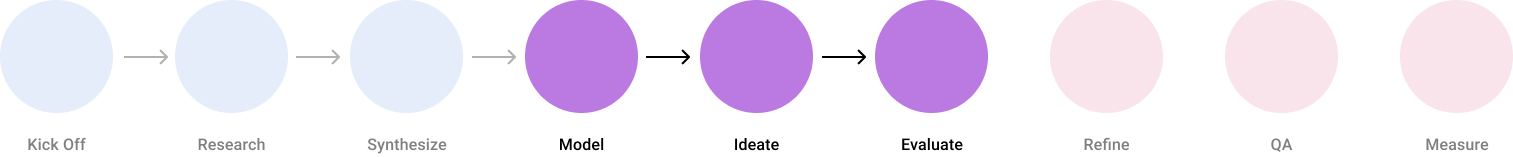

Model

One of my favorite ways to design is to create the ideal state, or north star, first, then break it down into testable increments and create a testing tree to follow. This method of designing gives product and development a vision of the future and lessens the likelihood of a higgledy-piggledy outcome. “Always keep an eye on the forest when you’re working in the trees.”

Ideation and wireframing

To get the cleanest data possible we broke this down into two tests: first we tested save, and then similar.

Testing structure and variants

I know this looks like a lot of the same very cool guy listening to music, but there are subtle differences. In variant A we’re testing the placement of the heart icon against the control placement. In variant B we’re testing that same placement with ‘save’ added next to the icon in a button style.

I did the same here for similar, with the added assumption that we’ve called the previous ‘save variant B’ test a winner. Again, variant A tests the location of the similar icon button, and variant B tests the location plus the icon + text button style.

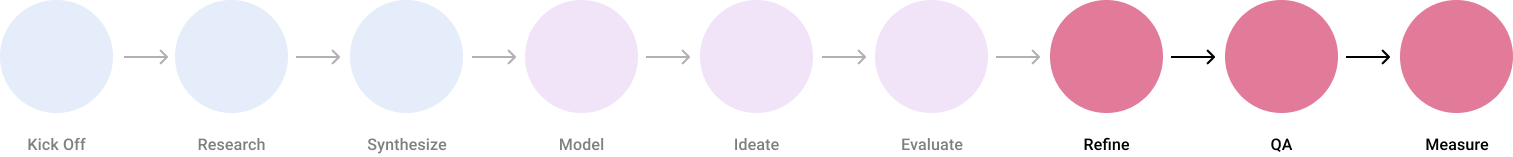

A/B Testing and results

TL;DR: our hypotheses were correct! Moving the location of both buttons and adding the text to the button drove increased engagement, bookings, and paid order rate.

Key Takeaways & Learnings for ‘save’:

Save rate increased +4.8% (saving is only available for logged-in users)

Paid order rate increased +3.9% (77% stat sig)

Once users save an asset they arrive on the catalog page. The catalog page has one of the highest paid order rates on the site.

Lead order rate down -4% (85% stat sig)

Users who click save end up on the catalog page. When checking out from the catalog page, there is no free trial option (they are taken to the paid order flow instead).

Logged-out (LO) users who click ‘Save’ are sent to a sign-up page / flow to create an account, without the free trial appearing.

SRP > ADP % increased +1.9%. This is likely due to de-emphasizing the ‘preview’ button that many users click on. A previous experiment showed success when fewer users were taken to ‘preview’ and more were pushed to the asset detail page (ADP) instead.

Click % on ‘Preview’ down -86% (Logged-out), -41% (Logged-in)

Clicks on the ‘save’ icon up +61% for logged-out users, but sign-up rate flat.

The current experience takes users to a new page to create an account (FBA), not to a free trial link.

Key Takeaways & Learnings for ‘similar’:

The test was run on logged-in users only because of a test conflict. The bookings number reflects this, and should be higher if we take into account a similar impact for logged-out users.

Unique Click-Through Rate % on ‘Similar’ button (click.searchresults.similar)

Variant B = +348% improvement (logged-in)

+4.2% Paid Order Rate

+6.8% Bookings per Session

A large portion of that increase came from Video orders, which were not part of the test (as this test is only on images). Without the video increase, the true impact to bookings annualized is +224k (for Variant B).

Variant B showed large increases to Flex 10, Flex 25 (the 350 and 750 are due to small sample sizes).

We estimate +$74K in annual bookings for logged-out (LO) users with this test. This comes from looking at the relative bookings lift in the logged-in (LI) version, then considering the portion of LI vs LO bookings overall from that flow. Reminder that this is an estimate, and a very conservative one that did not take into account any potential increase in traffic into ‘similar’ for LO users.
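The logged-out estimate described above can be sketched roughly as follows. This is only an illustration of the reasoning, not the actual calculation behind the +$74K figure: the function name, parameters, and example inputs are all hypothetical placeholders, since the underlying lift and bookings-split numbers are not given here.

```python
def estimate_lo_bookings_lift(
    li_relative_lift: float,  # relative bookings lift observed for logged-in users (e.g. 0.05 = +5%)
    flow_bookings: float,     # total annual bookings attributed to this flow (LI + LO)
    lo_share: float,          # portion of those bookings that comes from logged-out users
) -> float:
    """Apply the logged-in relative lift to the logged-out share of bookings.

    Assumption: logged-out users would respond with the same relative lift
    as logged-in users, with no change in traffic into 'similar'.
    """
    lo_baseline = flow_bookings * lo_share
    return lo_baseline * li_relative_lift


# Hypothetical placeholder inputs, NOT the real Shutterstock figures.
example = estimate_lo_bookings_lift(
    li_relative_lift=0.05,
    flow_bookings=1_000_000.0,
    lo_share=0.20,
)
print(f"Estimated LO annual bookings lift: {example:,.0f}")
```

Because the sketch holds logged-out traffic into ‘similar’ constant, it errs on the conservative side in the same way the estimate above does.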

Reflection

This project was an easy win, something leadership loves to hear about: what did you do that took little to no dev effort but had a big impact for the business and our users? This project embodies that mentality perfectly.

Was the project successful?

This project wasn’t taken seriously at first, so I learned how to influence product to run the test I wanted. In case you are unaware, it’s always in the numbers: learning to write up a business case will unlock many doors.

What did I learn?

I find low-hanging fruit and big wins. I can and will present a business case to influence product and gain the support needed to get a test run and implemented.

What this project says about me?